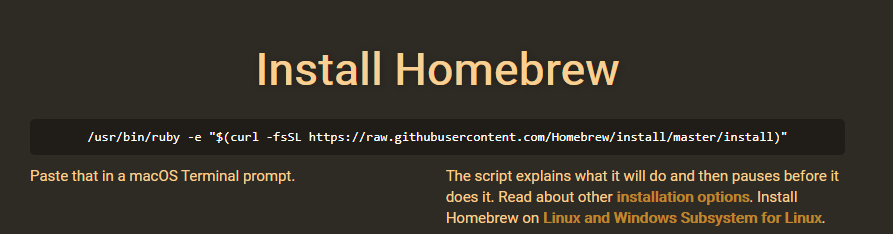

Follow the steps below to install Apache Spark on your Mac. Before installing Apache Spark, you need Java, Homebrew, and the other prerequisites that Spark needs to run properly on your Mac.
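As a quick way to check the prerequisites mentioned above, here is a minimal sketch that looks for the `java` and `brew` commands on your PATH. The function name `find_tools` and the tool list are only illustrative. Note that macOS can ship a placeholder `java` command even when no JDK is installed, so treat a hit for `java` as a hint and confirm it by running `java -version` in your Terminal.

```python
import shutil

# Prerequisites named in this tutorial; extend the tuple if you need more.
REQUIRED_TOOLS = ("java", "brew")


def find_tools(tools=REQUIRED_TOOLS):
    """Map each command name to its location on PATH, or None if it is missing."""
    return {tool: shutil.which(tool) for tool in tools}


# Print one line per tool so missing prerequisites are easy to spot.
for tool, path in find_tools().items():
    print(f"{tool}: {path or 'not found on PATH'}")
```

Anything reported as not found needs to be installed before you continue.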

HOW TO INSTALL SPARK ON MAC

Installing Apache Spark on your Mac is not a single-package installation; it can require preliminary checks and several separate installation steps. Whether you are analyzing datasets, using machine learning, or using Python in other areas of development, having the prerequisites in place on your Mac is essential. If your work involves analytics or Python development, practicing and working with PySpark becomes a daily part of your life. Scala and the other prerequisite packages also need to be installed. To start PySpark and open up Jupyter, you can simply run $ pyspark. You only need to make sure you're inside your pipenv environment.
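Once Jupyter is open, a tiny job is a reasonable way to confirm that Spark actually runs. The sketch below is an assumption about how you might test it, not part of the original setup: the function name `count_rows` and the app name are made up for illustration, and it assumes `pyspark` is importable in your environment.

```python
def count_rows(n=1000):
    """Run a tiny local Spark job and return the number of rows it counted."""
    # Imported here so that defining the function does not require Spark yet.
    from pyspark.sql import SparkSession

    # getOrCreate() reuses the session the pyspark launcher already started,
    # or creates a local one that uses all available cores.
    spark = (
        SparkSession.builder
        .master("local[*]")
        .appName("install-smoke-test")  # hypothetical name, pick your own
        .getOrCreate()
    )
    # range(n) builds a one-column DataFrame with n rows; count() runs the job.
    return spark.range(n).count()
```

Calling `count_rows()` in a notebook cell should return the number of rows you asked for. The session is deliberately left running so the rest of your notebook can keep using it.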

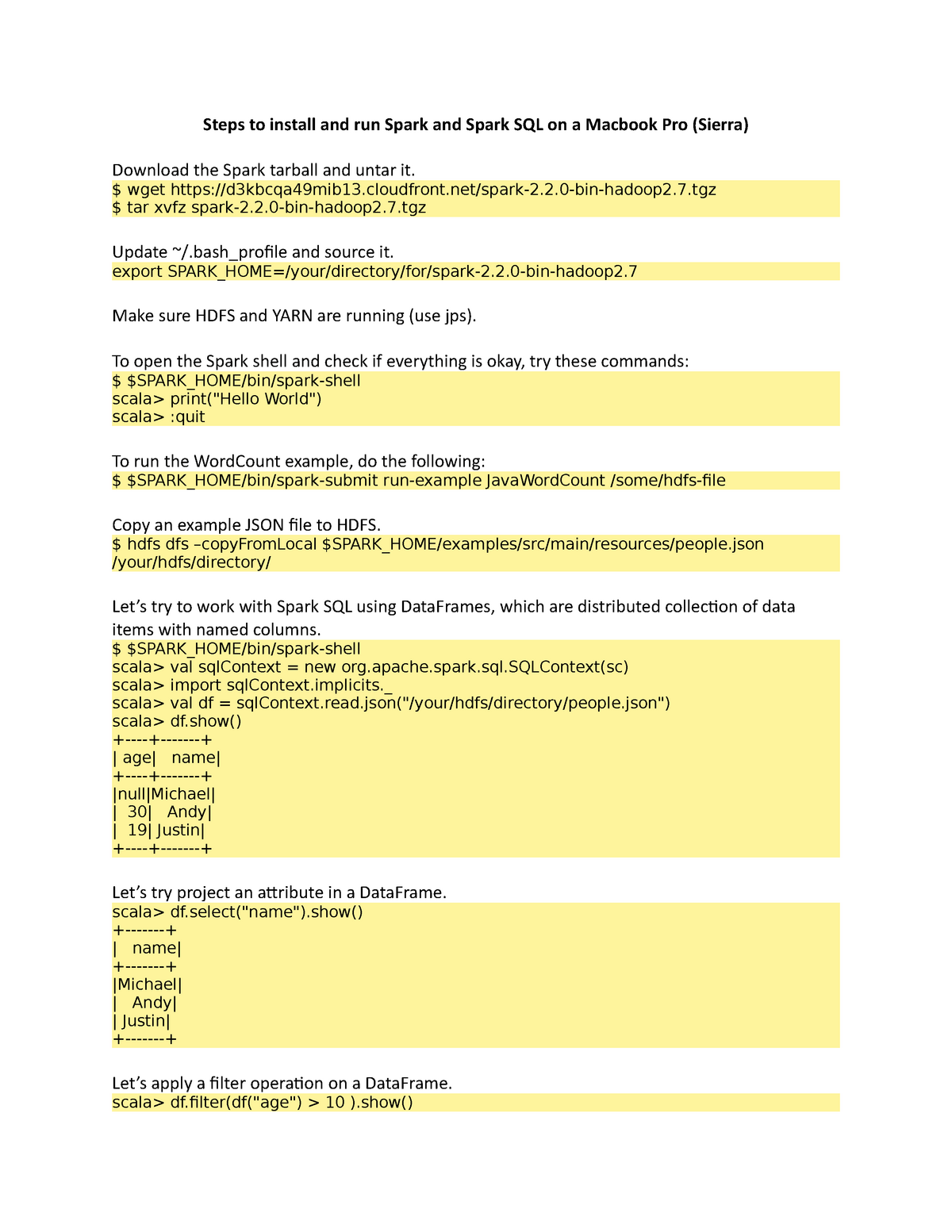

Your ~/.bashrc or ~/.zshrc should now have a Spark section (under a # Spark comment) that ends with export PYSPARK_DRIVER_PYTHON_OPTS='notebook' and, only if you're using Python 3, export PYSPARK_PYTHON=python3. Now save the file and source it in your Terminal. Installing Java is one of the mandatory steps in installing Spark.

Now tell PySpark to use Jupyter: in your ~/.bashrc or ~/.zshrc file, add export PYSPARK_DRIVER_PYTHON=jupyter and export PYSPARK_DRIVER_PYTHON_OPTS='notebook'. If you want to use Python 3 with PySpark (see step 3 above), you also need to add export PYSPARK_PYTHON=python3. Step 1 of the Apache Spark installation is verifying your Java installation.
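After sourcing the file, you may want to confirm that the variables above are actually set in your session. The following is a minimal sketch of such a check; the helper name `check_pyspark_env` is hypothetical, and the expected values are simply the ones from the export lines in this tutorial.

```python
import os

# Expected values, copied from the export lines in this tutorial.
EXPECTED = {
    "PYSPARK_DRIVER_PYTHON": "jupyter",
    "PYSPARK_DRIVER_PYTHON_OPTS": "notebook",
    "PYSPARK_PYTHON": "python3",  # only needed if you're using Python 3
}


def check_pyspark_env(env=None):
    """Return {variable: (expected, actual)} for every setting that differs."""
    env = os.environ if env is None else env
    return {
        name: (want, env.get(name))
        for name, want in EXPECTED.items()
        if env.get(name) != want
    }


# Report each mismatch, or confirm that everything matches.
problems = check_pyspark_env()
for name, (want, got) in problems.items():
    print(f"{name}: expected {want!r}, found {got!r}")
if not problems:
    print("All PySpark variables are set as expected.")
```

If a variable shows up as `None`, the file was probably not sourced in the Terminal session you are running from.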


Whether it's for social science, marketing, business intelligence or something else, the number of times data analysis benefits from heavy-duty parallelization is growing all the time. Apache Spark is an awesome platform for big data analysis, so getting to know how it works and how to use it is probably a good idea. Setting up your own cluster, administering it etc. is a bit of a hassle to just learn the basics though (although Amazon EMR or Databricks make that quite easy, and you can even build your own Raspberry Pi cluster if you want…), so getting Spark and PySpark running on your local machine seems like a better idea. You can also use Spark with R and Scala, among others, but I have no experience with how to set that up.

For the step-by-step Apache Spark installation, change to the directory where you wish to install Java. This tutorial has used the /DeZyre directory.